Sustaining AI Adoption on Your Team: Moving from Launch to Long-Haul Momentum

Your organization launched the tools. Ran the trainings. Clarified the policies. Maybe even branded your AI initiative to rally employees and excite stakeholders.

Now what?

Three Brutal Truths About AI Adoption

- For many organizations, AI remains more of a talking point than a true driver of change in daily work, employee experience or customer service.

- Even with a thoughtful, risk-aware approach, adoption may not be straightforward or fast.

- Employees will always be at different stages – some experimenting, some integrating AI into workflows, some skeptical or uncertain, and many shifting between these states as priorities and information evolve.

Your Role as a Leader

That’s where leaders – C-suite members, team leads and managers alike – come in. With AI adoption – a business transformation that carries emotional baggage, operational challenges and even existential questions – leaders have a responsibility to guide their people through the hype and toward something practical that drives business value.

What You Should Get from This Article

This piece closes out our 2025 series on AI adoption. The first article mapped out readiness across culture, leadership, knowledge and infrastructure. The second examined why adoption stalls, unpacking hesitations at the enterprise, team and individual levels. The third highlighted risks when communication and leadership lag behind technology.

Those pieces focused on the big picture and the organizational must-haves. This one assumes those foundations are in place. It gets more tactical – outlining what leaders can do with their teams to move from launch to long-haul momentum.

Ultimately, sustaining adoption comes down to three things: reinforcement, relevance and reflection.

1. Reinforcement: Make AI Part of Everyday Routines

After rollout, leaders must embed AI into daily routines, not treat it as a one-off initiative.

Practical ways leaders can reinforce AI:

- Build in five minutes during team meetings for questions, concerns and hesitations related to AI use. Consider launching a dedicated channel, email thread or chat on your company’s collaboration platform so team members can share resources and ideas in real time. Funnel what you hear to the cross-functional team responsible for driving adoption.

- Identify and empower an AI champion – ideally, someone curious, willing to advocate and experiment, and who is influential on the team. Position this role as a professional development opportunity.

- Integrate AI into performance conversations and onboarding so it’s part of every team member’s role, not an optional add-on. Encourage people to rethink their work – and how that work gets done – in ways that push your team’s objectives forward.

If reinforcement isn’t visible in everyday conversations, adoption will stall. Leaders should watch for signs that AI is still being treated as optional, and redirect until it is understood as an expectation.

2. Relevance: Tie AI Directly to the Work People Do

Adoption won’t stick if AI feels abstract or disconnected. It has to feel useful in the context of actual work.

Practical ways leaders can make AI relevant:

- Share your own AI examples regularly – where it saved time, where it added value and, just as important, where it didn’t and why. Use existing channels – chat, email, 1:1s with direct reports and team meetings – to socialize your learnings.

- Engage the team in solving challenges and capitalizing on opportunities together. For example, run bi-weekly brainstorming sessions where team members bring problems and explore whether AI can help address them.

- Recognize small wins so adoption feels attainable – and do the same with failures so the team can learn from what didn’t work. Spotlight and reward team members who solve customer challenges, improve processes or identify new use cases.

Relevance ensures employees see AI as a tool for them – not just for the company. Leaders should surface challenges, encourage collaboration and keep examples concrete and tied to team goals.

3. Reflection: Measure What Actually Matters

Tracking logins shows activity – but not necessarily maturity. Leaders need to move beyond superficial usage metrics and measure whether adoption is building confidence, capability and alignment with business objectives.

Practical ways leaders can reflect on adoption:

- Run short (potentially anonymous) monthly pulse surveys with two or three questions that gauge clarity of your company’s AI strategy, how it connects to employees’ work, and confidence in using the tools to solve business problems. Include at least one open-ended question for crowd-sourced ideas and opportunities.

- Work with your AI champion to surface issues employees may hesitate to raise directly with you. Encourage them to set weekly office hours or meet 1:1 with team members to collect insights, and report back to you.

- Check often whether AI efforts are aligned with team objectives. If your priority is expanding your customer base, do you have the use cases to support it – or are you drifting into experimentation that doesn’t advance your goals? Consider setting time with your AI champion each month to reflect on whether you’re driving the value you set out to.

Reflection helps separate meaningful progress from surface activity. Pairing usage data with comprehension metrics gives leaders a sharper view of where adoption stands and where support is most needed.

The Final Test: Is Your Team Living It?

At the start of this series, we asked what readiness looked like at the organizational level. Now the question is more immediate: Is your team living it?

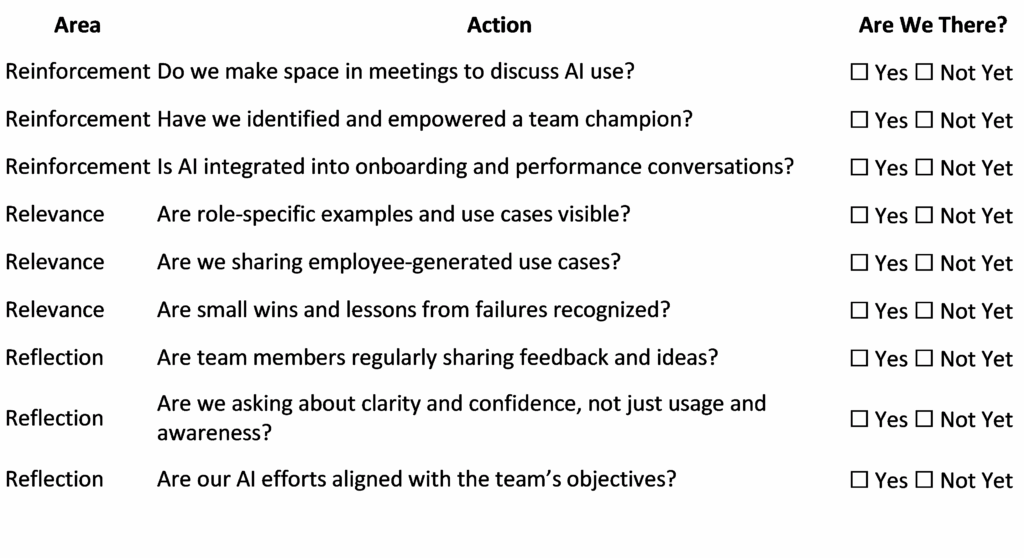

Use this scorecard to check your progress:

This isn’t a one-time exercise. Revisit it monthly if you can, and quarterly at a minimum. Consider having your AI champion fill it out too, to guard against blind spots.

The Bottom Line

The biggest challenge of AI transformation in 2026 isn’t speed – it’s staying power. The organizations and teams that succeed will be the ones that take the actions above now and treat adoption as an ongoing process, not a one-time push.

Josh McConnell is a VP of Technology based in New York, where he helps companies navigate complex narratives at the intersection of innovation, reputation and culture. He brings over 15 years of experience across journalism and corporate comms, with leadership roles at Uber and Xero. As a journalist, he regularly interviewed tech leaders including Tim Cook, Satya Nadella and Jack Dorsey.
