The AI Readiness Gap Series: Why Normalization Is the Most Skipped, and Most Essential, Phase of AI Adoption

This is the second installment in a series on what it takes to close the gap between AI investment and tangible business impact.

In the first piece, I argued that the real barrier to AI adoption is not the technology itself. It is the human side of change. You can have the tools, investment and strategic urgency — and still fall short if your people are not ready to come with you.

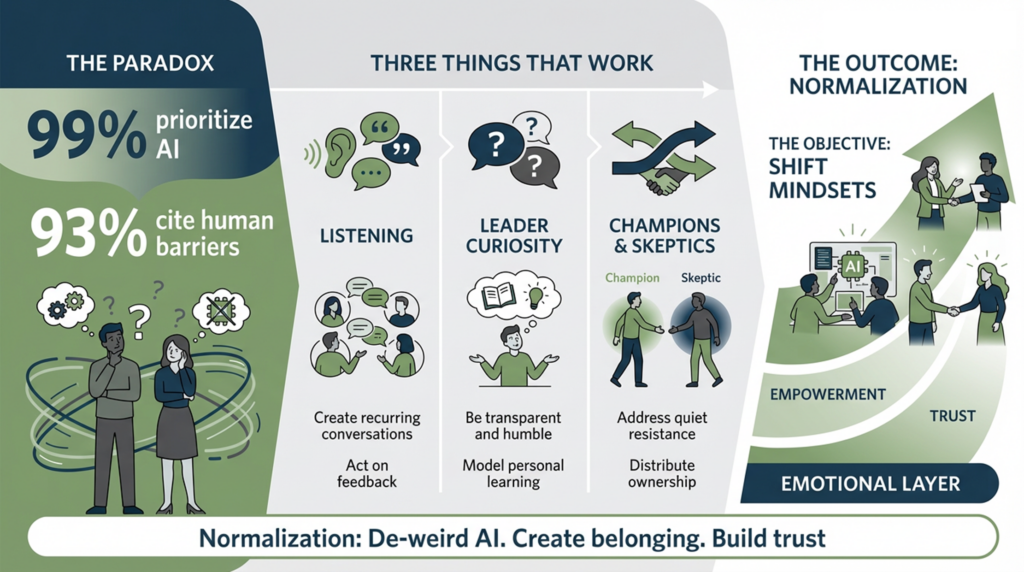

A new data point from Harvard Business Review reinforces just how widespread this challenge has become. In its annual AI & Data Leadership Executive Benchmark Survey, 99% of respondents said investments in data and AI are a top organizational priority.

And yet, 93% identified human issues — culture and change management — as the key challenge to AI adoption, the highest percentage in the survey’s 15-year history.

That is the paradox organizations are facing right now. We have never been more aligned on the importance of AI, and we have never been clearer about what is standing in the way.

So, what do we do about it?

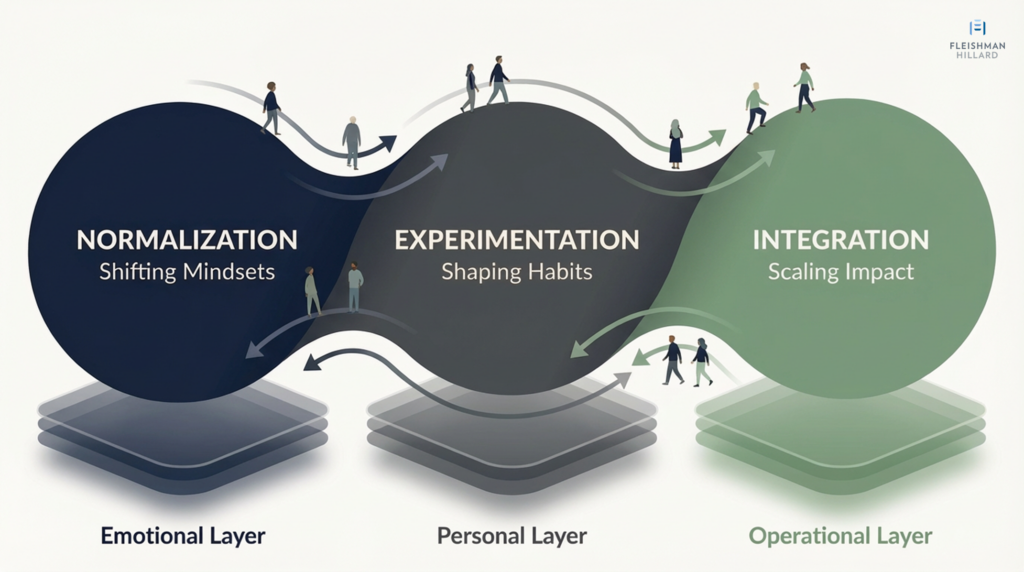

That is what this series is for. In forthcoming posts, I will go deeper into each phase of the AI adoption continuum I introduced in the first piece, starting with the one most organizations rush past: normalization.

What Normalization Means

Normalization is not a communications campaign. It is not a CEO video about the future of work. And it is not a training session scheduled before a platform goes live.

It is the deliberate, ongoing work of helping people feel safe, supported and included as they begin to make sense of AI and what it may mean for their work. It is how organizations “de-weird” the technology, create space for honest questions and begin making AI feel like something that belongs in everyday work rather than something being imposed from above.

Why Normalization Matters

Psychological safety is a critical condition for learning, experimentation and collaboration. When people don’t feel safe, they don’t ask questions, test ideas or admit what they don’t know. They comply quietly, or they quietly disengage. Neither is adoption.

The goal of normalization is to close the distance between where people are emotionally and where the organization needs them to be. Some employees will move quickly and begin experimenting right away with the tools they now have at their disposal.

Others will be unfamiliar, skeptical or unsure what this shift means for their role, their value or their future. For those employees especially, adoption does not begin with training. It begins with the feeling that engaging with AI will not make them look foolish, irrelevant or behind.

And creating that kind of readiness requires three things done well.

Three Things That Actually Work in the Normalization Phase

1. Create space – and systems – for listening.

The biggest mistake organizations make in this phase is starting with all the answers. They launch the platform, send the announcement, schedule the training – and assume those things alone will shift mindsets and change behavior.

They won’t.

What creates the conditions for readiness is being heard first. At its core, this means building an ongoing conversation about AI across the organization – one that gives employees regular, low-pressure spaces to surface questions and ideas, voice concerns and get honest responses.

That can take several forms: Office hours. Small-group sessions. Open Q&A. Pulse surveys and live polls. Not as symbolic gestures, but as mechanisms for shaping how AI gets introduced into the work people actually do.

And if you’re going to ask people to take the time to engage, you must show that what they share matters. The only thing worse than not asking employees for feedback is asking and then ignoring what you hear.

That’s why listening cannot be treated as a one-time event. It has to be built into the rollout itself.

One all-hands meeting is not an AI listening strategy. Listening has to be structured, recurring and visibly tied to action. When people see their input reflected in how your AI transformation evolves, trust grows. When they don’t, skepticism hardens.

2. Coach leaders to show curiosity.

This may be the most uncomfortable shift for many leaders — and one of the most important.

We often expect leaders to project confidence during change: “Here’s where we’re going. Here’s why it’s the right call. Here’s what I need you to do.” In many transformations, that kind of clarity is reassuring. But AI introduces a level of uncertainty that makes a different posture more effective.

Much of this is still unfolding, and employees know that. When leaders over-index on certainty, it can unintentionally create distance. What tends to build trust instead is transparency – a willingness to share what is clear, what is still emerging and what they themselves are learning along the way.

Leaders who say, “Here’s what I tried last week. Here’s where it didn’t go as expected. Here’s what I’m still figuring out,” give their teams permission to approach AI the same way: openly, curiously and without needing to have everything resolved upfront. In doing so, they model the kind of learning culture this moment requires.

And this does not have to be overly formal. It can be as simple as a leader taking a few minutes in a team meeting or a 1:1 to share how they have been using AI, where it has helped, where it has fallen short and then asking whether others are seeing similar use cases or running into similar issues. Moments like that make AI feel less abstract and more like part of how the team solves problems and gets work done.

A little humility goes a long way here. Saying, “We don’t have all the answers yet, but we want to understand what you’re seeing and what you need,” helps build the trust and reciprocity that make people more willing to engage over time.

3. Engage both champions and skeptics.

Most AI rollouts activate champions. Fewer engage skeptics.

That’s a missed opportunity – and often a source of quiet resistance that never gets addressed.

Champions build belief. They carry peer influence, spread early momentum and make it socially safe to try.

But skeptics matter too. They ask the questions others are hesitant to raise, stress-test the strategy and identify blind spots the optimists have not yet considered.

And both groups need to be identified across the organization. The concerns people have, the language that resonates, and the use cases that feel relevant will differ by role, function, team and location. A centralized group of AI-forward employees alone will not catch those nuances.

Bring both into the process. Involve them in reviewing messaging before it goes out. Ask them to serve as ears on the ground within their teams, surfacing the quiet hesitations people may not yet be voicing openly. Invite them to curate real-world examples, flag what feels off and help co-create the evolving story – not just receive it.

When the people most likely to champion the change and the people most likely to question it both have a hand in shaping the narrative, two things happen: the strategy gets sharper, and trust grows. That makes the rollout more credible, because it starts to reflect the reality of how different parts of the organization will actually experience it.

How You Know It’s Working

Normalization isn’t a box you check. It’s a condition you build. Here are three signals that tell you the work is landing:

- Safety and trust are growing. Survey data and anecdotal feedback show people feel comfortable asking questions about AI – even uncomfortable ones.

- Ownership is being distributed. Champions and skeptics are in the room, giving honest input, not just nodding along.

- Early participation is building. Attendance at office hours, demos and opt-in sessions is growing – not because it’s mandatory, but because people are curious and finding value in what you’re sharing.

These signals matter because they show people are getting more comfortable – asking questions, engaging more openly, and beginning to see where AI might fit into their work.

But that does not mean everyone is in the same place. In most organizations, some people will already be experimenting or integrating AI into parts of their workflow, while others are still making sense of what this technology means for their role, their value and their day-to-day work.

That is why normalization matters. It is not something you complete before moving on. It is the ongoing foundation that helps leaders understand where people are, how they are experiencing the change and what they need next as the work continues.

Organizations should be moving. But they need to keep listening as they do. That is what makes adoption more coherent, more durable and more likely to spread beyond the early adopters.

Margaux Vega is a FleishmanHillard senior lead and strategist for Fortune 500 companies, driving integrated communications from strategy to shape brand perception at scale. At the forefront of new ways of communications thinking, Margaux is focused on visibility and influence in an AI-first landscape.


Matt Rose is the Americas Lead for Crisis, Issues & Risk Management. An SVP & Senior Partner in New York, he brings more than 30 years’ experience in advising organizations on crisis and issues management, risk mitigation, and reputation recovery. He has guided companies through reputational crises, labor issues, regulatory challenges, ESG controversies, and high-profile litigation.


Allison Koch, MS, RD, CSSD, LDN, is a vice president in FleishmanHillard’s Chicago office, where she provides nutrition communications counsel for clients. A registered dietitian with more than 20 years of experience, she’s passionate about helping brands connect science and storytelling to inspire healthier choices and stronger consumer trust.
